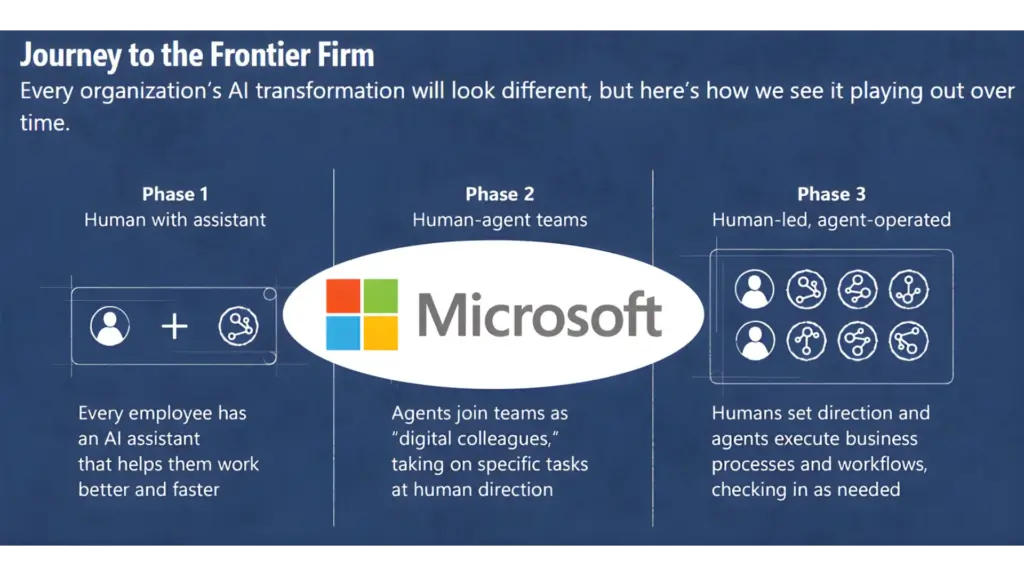

A new breed of company is emerging, redefining what it means to be agile, innovative, and scalable. These are the frontier firms: organisations that do not just adopt new technologies but are fundamentally built around them, particularly Artificial Intelligence (AI). As we look towards 2026, AI-first companies are not just competing; they are leading the charge in rapid scaling and market disruption. But what exactly constitutes a frontier firm, and what are the mechanisms behind their accelerated growth?

Consider one statistic: a recent report by McKinsey & Company found that companies that have adopted AI at scale are seeing significant revenue increases, with some reporting up to a 50% jump. This isn’t a minor uptick; it’s a fundamental shift in business performance, driven by intelligent automation, predictive analytics, and hyper-personalised customer experiences. Frontier firms are the vanguard of this transformation, leveraging AI not as an add-on, but as the core engine of their operations.

This blog explains what frontier firms are, how their AI operating models work, and what they mean for businesses in 2026.

What Is a Frontier Firm?

At its heart, a frontier firm is an organisation that places Artificial Intelligence at the absolute centre of its strategy, operations, and culture. This isn’t about having a dedicated AI team or a few AI-powered tools; it’s about an AI-first mindset that permeates every level of the business. These companies are characterised by:

- AI-Native Architecture: Their foundational systems, data infrastructure, and workflows are designed with AI capabilities in mind from the outset. This contrasts with legacy companies, which typically retrofit AI onto existing, often cumbersome, IT architectures.

- Data-Driven Decision-Making: Frontier firms operate on a continuous loop of data collection, analysis, and action. AI algorithms are integral to processing vast datasets, identifying trends, and informing strategic decisions in real time.

- Automated Processes: From customer service chatbots to supply chain optimisation, automation is a hallmark of frontier firms. They identify repetitive tasks and processes ripe for AI-driven automation, freeing up human capital for more strategic and creative endeavours. This is a key part of why companies need to automate their business processes.

- Continuous Learning and Adaptation: The AI models powering these firms are not static. They are designed to learn, adapt, and improve over time, allowing the company to remain agile and responsive to market changes. This iterative process is crucial for sustained growth.

- Talent Ecosystem: Frontier firms attract and cultivate talent with AI expertise. Their workforce is adept at collaborating with AI systems, understanding AI outputs, and contributing to the ongoing development of AI capabilities.

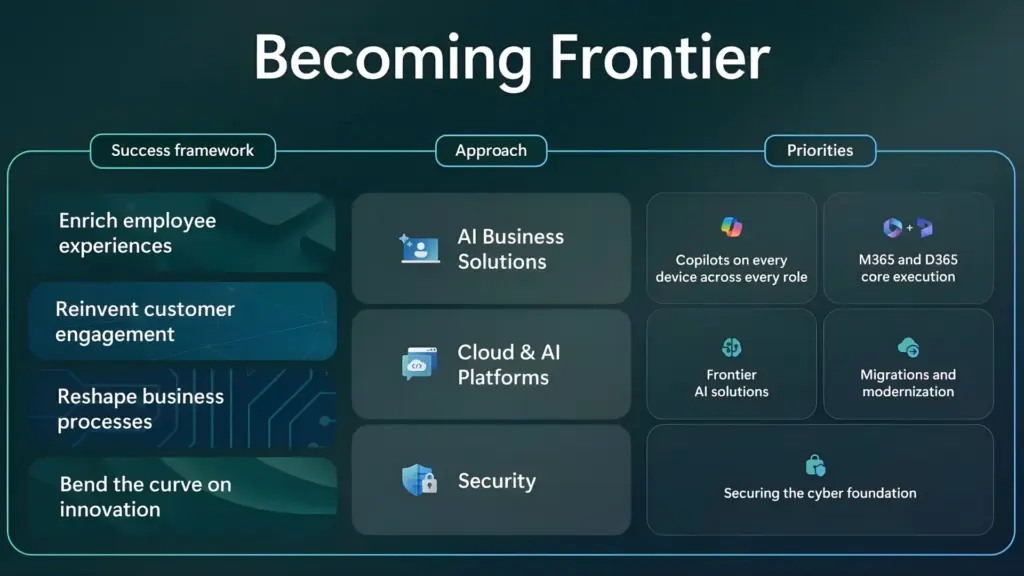

The concept of a frontier firm aligns with the broader trend of digital transformation, but it takes it a significant step further by prioritizing AI as the primary enabler of competitive advantage. As highlighted in discussions around AI leadership strategy, successful leaders understand that AI is not just a tool but a strategic imperative.

The AI Advantage: Fueling Accelerated Scaling

The primary differentiator for frontier firms is their ability to scale faster and more efficiently than their traditional counterparts. AI provides several key levers for this accelerated growth:

1. Enhanced Operational Efficiency

AI excels at automating complex, repetitive, and data-intensive tasks. This ranges from mundane data entry and report generation to sophisticated process automation. For example, AI can manage inventory levels in real time, predict equipment maintenance needs, and streamline customer onboarding processes. Reducing manual labor and human error in this way leads to significant cost savings and improved throughput.

Consider the impact on customer service. Human agents can handle only a limited number of queries at once, whereas AI-powered chatbots and virtual assistants can handle a vast volume of customer interactions 24/7. These AI tools can answer frequently asked questions, guide users through troubleshooting steps, and even process simple transactions, escalating complex issues to human agents only when necessary. This reduces operational costs and can improve customer satisfaction through faster response times. Companies are increasingly exploring Power App use cases that leverage AI for such efficiencies (see “10 Game Changing Power App Use Cases For Businesses In 2025”).
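To make the escalation pattern concrete, here is a minimal Python sketch of a support bot that answers known questions and hands everything else to a human. It is an illustration only: the `FAQ_ANSWERS` table, the `Reply` type, and the `handle_query` function are hypothetical names, and simple phrase matching stands in for the trained language model a production chatbot would use.

```python
from dataclasses import dataclass

# Hypothetical FAQ table (an assumption for this sketch). A real system
# would use an intent model rather than fixed phrases.
FAQ_ANSWERS = {
    "opening hours": "We are open 9am to 5pm, Monday to Friday.",
    "reset password": "Use the 'Forgot password' link on the sign-in page.",
}


@dataclass
class Reply:
    text: str
    escalated: bool  # True when the query is handed to a human agent


def handle_query(query: str) -> Reply:
    """Answer from the FAQ table if a known phrase appears; otherwise escalate."""
    normalised = query.lower()
    for phrase, answer in FAQ_ANSWERS.items():
        if phrase in normalised:
            return Reply(text=answer, escalated=False)
    # Nothing matched: treat the query as complex and route it to a person.
    return Reply(text="Passing you to a human agent.", escalated=True)
```

Calling `handle_query("How do I reset password?")` returns an automated answer, while an unrecognised query such as `"My invoice is wrong"` comes back with `escalated=True`. The design choice worth noting is the default: anything the bot does not recognise goes to a human rather than receiving a guessed answer.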

2. Data-Driven Insights and Predictive Capabilities

The ability to process and analyze massive datasets is a core strength of AI. Frontier firms use AI to uncover hidden patterns, predict future trends, and gain deep insights into customer behavior, market dynamics, and operational performance. This predictive capability allows them to:

- Anticipate Market Shifts: AI can analyze news, social media, economic indicators, and competitor activities to predict upcoming market trends and potential disruptions, enabling proactive strategy adjustments.

- Personalize Customer Experiences: By analyzing customer data, AI can predict individual preferences and tailor product recommendations, marketing messages, and service offerings, leading to higher conversion rates and customer loyalty.

- Optimize Resource Allocation: AI can forecast demand for products and services, allowing businesses to optimize inventory, staffing, and production schedules, thereby minimizing waste and maximizing profitability.

- Identify New Opportunities: AI can scan vast amounts of data to identify unmet customer needs or emerging market niches that might otherwise go unnoticed.

This sophisticated use of data analytics is a far cry from traditional business intelligence, which often relies on historical data and manual analysis. AI brings a forward-looking, dynamic approach to decision-making. The importance of this predictive power cannot be overstated; it’s a key factor in outmanoeuvring competitors. According to Gartner, AI is poised to be one of the most transformative technologies of the next decade, driving significant business value through enhanced decision-making.
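As a rough illustration of the demand-forecasting idea behind resource allocation, the Python sketch below predicts the next period’s demand and turns it into a reorder quantity. It rests on stated assumptions: a simple moving average stands in for a trained forecasting model, and the function names `forecast_next` and `reorder_quantity`, the window size, and the safety-stock parameter are all hypothetical.

```python
from statistics import mean


def forecast_next(demand_history: list[float], window: int = 3) -> float:
    """Forecast next-period demand as the mean of the last `window` periods.

    A moving average is a deliberately simple baseline, not an AI model.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    if not demand_history:
        raise ValueError("demand_history must not be empty")
    recent = demand_history[-window:]
    return float(mean(recent))


def reorder_quantity(forecast: float, on_hand: float, safety_stock: float = 0.0) -> float:
    """Units to order so stock covers the forecast plus a safety buffer."""
    return max(0.0, forecast + safety_stock - on_hand)
```

For example, with a demand history of `[100, 120, 110, 130]` and a window of 3, the forecast is the average of the last three periods, and `reorder_quantity` then subtracts stock on hand to give the amount to order. A frontier firm would swap the baseline for a learned model while keeping the same forecast-then-act loop.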

3. Hyper-Personalization at Scale

In today’s competitive market, generic approaches no longer suffice. Customers expect personalized experiences, and AI is the engine that makes this possible at scale. Frontier firms use AI to:

- Deliver Tailored Content: Whether it’s website content, email marketing, or product recommendations, AI can dynamically adjust what each individual user sees based on their past behavior, preferences, and demographic information.

- Offer Proactive Support: AI can identify potential customer issues before they arise and offer proactive solutions or support, enhancing the customer journey.

- Optimize Pricing and Promotions: AI algorithms can analyze individual customer price sensitivity and purchasing history to offer personalized discounts and promotions that are most likely to convert.

This level of personalization fosters deeper customer engagement, increases lifetime value, and creates a significant competitive moat. It moves beyond simple segmentation to true one-to-one marketing and service delivery.

4. Enhanced Innovation and Product Development

AI is not just about optimizing existing processes; it’s also a powerful catalyst for innovation. Frontier firms leverage AI to:

- Accelerate R&D: AI can analyze vast scientific literature, patent databases, and experimental data to identify potential breakthroughs and accelerate the research and development cycle.

- Generate New Product Ideas: AI can analyze market trends and customer feedback to suggest new product features or entirely new product concepts.

- Improve Product Design: AI-powered simulation and generative design tools can help engineers and designers explore a wider range of possibilities and optimize product performance.

The integration of AI into the innovation pipeline allows frontier firms to bring new products and services to market faster and with a higher degree of certainty regarding their market fit. This agility in innovation is a critical factor in their ability to capture new market share.

The Role of AI-First Platforms and Tools

The rise of frontier firms is also facilitated by the increasing availability and sophistication of AI-powered platforms and tools. Companies like Synapx are at the forefront of providing solutions that empower businesses to harness the power of AI. These platforms often offer:

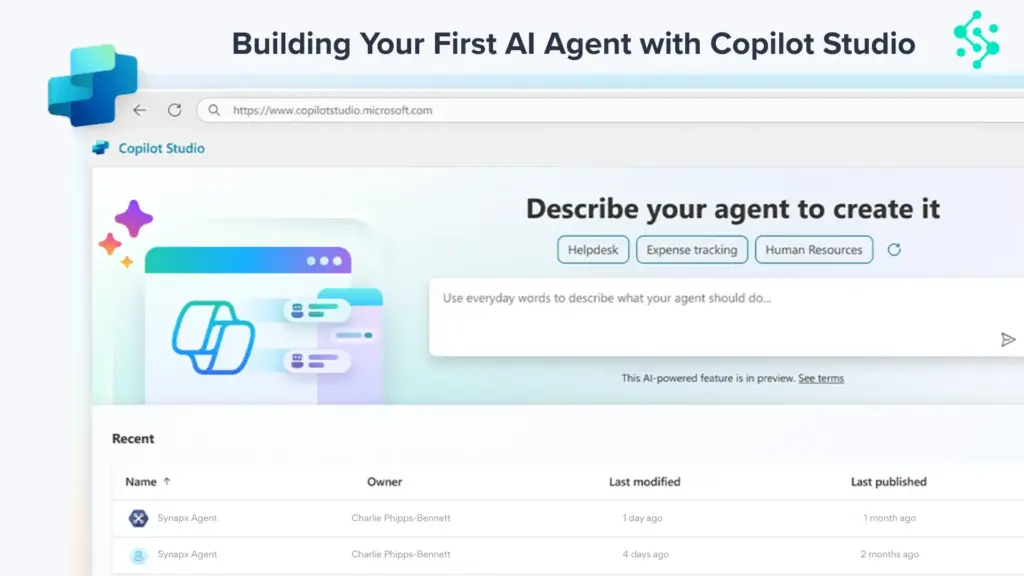

- Low-Code/No-Code AI Development: Tools like Microsoft Copilot Studio (see “Ai Microsoft Copilot Studio The Future Of Work”) are democratizing AI development, allowing individuals with limited coding expertise to build AI-powered applications and workflows. This significantly speeds up the deployment of AI solutions.

- Integrated AI Services: Cloud providers and specialized AI companies offer a suite of pre-built AI services (e.g., natural language processing, computer vision, machine learning) that businesses can easily integrate into their existing systems.

- AI Orchestration Tools: These tools help manage and coordinate multiple AI models and workflows, ensuring seamless integration and efficient operation.

- Data Management and Governance: Robust platforms provide the necessary infrastructure for collecting, cleaning, storing, and governing the vast amounts of data required to train and operate AI systems effectively.

By leveraging these platforms, frontier firms can bypass the significant upfront investment and complex infrastructure development that was once a barrier to AI adoption, allowing them to focus on strategic implementation and value creation.

Challenges and Considerations for Frontier Firms

While the advantages are clear, building and operating a frontier firm is not without its challenges:

- Data Quality and Bias: AI models are only as good as the data they are trained on. Ensuring high-quality, unbiased data is paramount to avoid discriminatory or inaccurate outcomes. The responsible use of AI is a growing concern, and organizations must implement robust data governance and ethical AI frameworks. IBM’s Trustworthy AI provides guidance on building responsible AI systems.

- Talent Acquisition and Retention: The demand for skilled AI professionals is exceptionally high. Frontier firms must invest heavily in attracting, training, and retaining top AI talent.

- Ethical and Regulatory Landscape: The ethical implications of AI, such as data privacy, job displacement, and algorithmic transparency, are subjects of ongoing debate and evolving regulation. Frontier firms must stay abreast of these developments and ensure compliance. The National Institute of Standards and Technology (NIST) is actively involved in developing AI standards and guidelines.

- Integration Complexity: While platforms are simplifying AI development, integrating AI seamlessly into existing business processes and legacy systems can still be a complex undertaking. This often requires a strategic approach to process re-engineering. Automating core business processes is often a precursor to successful AI integration (see “8 Business Processes You Need To Automate Today”).

- Security: AI systems, like any digital technology, are vulnerable to cyber threats. Protecting AI models and the data they use is a critical security concern.

The Future is AI-First

As we move further into the 2020s and beyond, the distinction between traditional businesses and frontier firms will likely become more pronounced. Companies that fail to embrace an AI-first strategy risk falling behind, unable to match the speed, efficiency, and innovation of their AI-powered competitors.

The journey to becoming a frontier firm involves a fundamental rethinking of business strategy, operational models, and organizational culture. It requires a commitment to data, a willingness to automate, and a vision for how AI can unlock new levels of performance and growth. The insights gained from events like “Ai In Action Synapx Event Future Of Work” underscore the urgency and opportunity presented by AI.

For businesses looking to thrive in 2026 and beyond, the message is clear: the future is AI-first, and the frontier firms are leading the way. Understanding their strategies and the underlying AI capabilities is no longer optional; it’s essential for survival and success.

Key Takeaways

- Frontier firms are organizations built around an AI-first mindset, integrating AI into their core strategy and operations.

- AI enables accelerated scaling through enhanced operational efficiency, data-driven insights, hyper-personalization, and faster innovation.

- AI-powered platforms and tools are democratizing AI development and implementation.

- Key challenges include data quality, talent acquisition, ethical considerations, integration complexity, and security.

- Adopting an AI-first strategy is becoming crucial for competitive advantage and long-term success.

Ready to Begin Your Frontier Journey?

Synapx helps organisations move from AI experimentation to Frontier Firm operating models through Microsoft Power Platform, Copilot Studio, and Microsoft Fabric. If you want to understand where your organisation sits on the maturity curve and what it would take to accelerate, get in touch.

Our approach combines deep technical expertise with pragmatic change management, ensuring AI becomes foundational to your operations, not just another tool in the stack.

We help organisations:

- Assess current state and AI readiness

- Design practical transformation roadmaps

- Implement enabling infrastructure that scales

- Build and deploy AI agents across functions

- Establish governance frameworks that enable speed

- Develop internal capabilities for sustained evolution

Contact Synapx to discuss how we can accelerate your journey to becoming a Frontier Firm in 2026. Let’s talk about where you are and where you need to go.